We believe empowering engineers drives innovation.

What should be done when one’s Terraform turns into technical debt?

Table of Contents

- The Necessity of Infrastructure as Code
- Platform Engineering
- Case Study: The Successful Platform Team
- Debt Collector on Line One
- Requirements for a Solution
- Existing Tools Fall Short
- Airflow: More Than an ETL Tool
- Bronco: Managing Terraform at Scale with Airflow
- Terraform Upgrades as Directed Acyclic Graphs
- Concurrency, Monitoring and Retries
- The X Factor
- Summary

The Necessity of Infrastructure as Code

Cloud computing has fundamentally transformed how computer software is run.

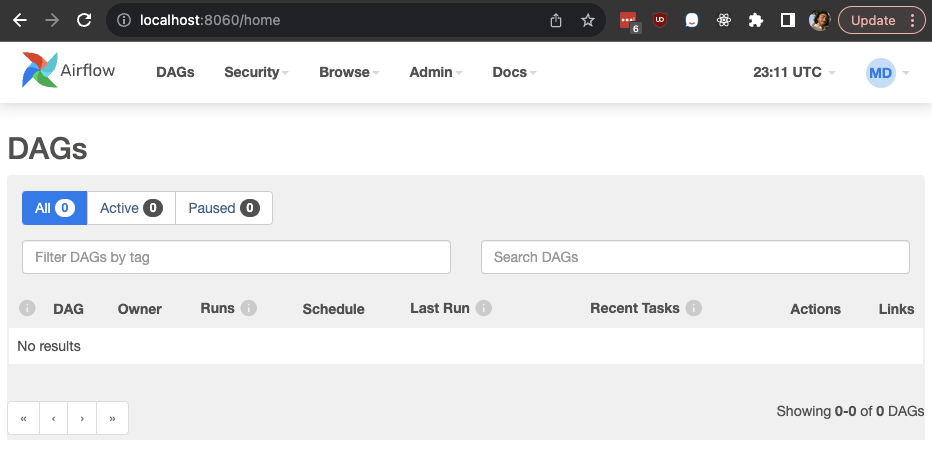

Hello! In this blog post we are going to dive deep into using OpenID Connect (OIDC) and your third-party identity provider to authenticate and assign permissions to users signing in to Airflow 2.X through the web UI. Airflow is a platform to programmatically author, schedule, and monitor workflows. It is primarily written in Python and comes with a web-based UI for managing workflows and other UI-driven tasks. The Airflow web UI uses Flask AppBuilder (FAB) as its primary framework, and Airflow provides methods for customizing which FAB authentication method is used.
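To make the "assign permissions" step concrete, here is a minimal sketch of mapping identity-provider group claims to Airflow role names. It is an illustration only, not the post's implementation: the `groups` claim name, the group names, and the `roles_for_claims` helper are all assumptions. Only the role names (`Admin`, `Op`, `Viewer`, `Public`) are Airflow defaults. In a real deployment this logic would live in a FAB security manager referenced from `webserver_config.py`, which is not shown here.

```python
from typing import Dict, List

# Hypothetical mapping from identity-provider group names to Airflow roles.
# The group names are invented for illustration; the role names are
# Airflow's default roles.
GROUP_TO_ROLE: Dict[str, str] = {
    "airflow-admins": "Admin",
    "data-engineers": "Op",
    "analysts": "Viewer",
}


def roles_for_claims(
    claims: dict,
    group_to_role: Dict[str, str] = GROUP_TO_ROLE,
    default_role: str = "Public",
) -> List[str]:
    """Return the sorted Airflow roles implied by a token's group claims.

    Assumes the identity provider places group names in a "groups" claim;
    the actual claim name depends on the provider's configuration.
    """
    groups = claims.get("groups", [])
    # Ignore groups with no mapping, and de-duplicate the resulting roles.
    roles = sorted({group_to_role[g] for g in groups if g in group_to_role})
    # Fall back to the least-privileged role when nothing matches.
    return roles or [default_role]
```

For example, a user in both `analysts` and `airflow-admins` would receive the `Admin` and `Viewer` roles, while a user with no recognized groups would fall back to `Public`.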

Apache Airflow is a phenomenal tool for building data flows. It’s reliable, well-maintained, well-documented, and has plugins for just about everything. It’s easy to extend, has pre-built deployment patterns, and is readily available in a variety of cloud environments. However, it’s also an aging tool, and has a few quirks that hark back to an earlier time in its development. Some issues include rigid DAG-centric dataflows, confusing differences between a run’s logical date and its wall-clock execution time, and significant limitations on the size of individual DAGs.
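The gap between logical time and wall-clock time can be illustrated with plain `datetime` arithmetic. The sketch below assumes an interval-based schedule (such as a daily one), where a run labeled with a given logical date covers the interval starting at that date and can only begin once the interval has ended. The `earliest_start` helper is hypothetical and not part of Airflow's API.

```python
from datetime import datetime, timedelta, timezone


def earliest_start(logical_date: datetime, interval: timedelta) -> datetime:
    """Earliest wall-clock time a run for this logical date can begin.

    Assumes the run covers [logical_date, logical_date + interval) and is
    triggered only after that interval has closed.
    """
    return logical_date + interval


# A daily run labeled 2024-01-01 cannot start before 2024-01-02.
logical = datetime(2024, 1, 1, tzinfo=timezone.utc)
start = earliest_start(logical, timedelta(days=1))
print(f"logical date: {logical.isoformat()}")
print(f"earliest wall-clock start: {start.isoformat()}")
```

This is why a task that reads "today's" data by its logical date is actually processing the previous interval by the time it runs.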

At Rearc, we work with hundreds of datasets sourced directly from various authorities, government agencies, and organizations. A core part of the value we provide to our customers is ensuring that they receive clean and reliable data, no matter what the raw original data looks like. While some of this is accomplished by enforcing data types and strict schemas, errors can easily slip through the cracks. To address these problems, we began investigating solutions for a succinct, self-documenting, self-verifying way of declaring what the output data should look like.